AI Water & Energy Usage: ChatGPT vs Claude vs Gemini (2026)

Key takeaways

-

AI is driving explosive growth in data centre electricity and water demand, with inference loads multiplying faster than web traffic ever did.

-

A standard AI query (GPT-4o, Gemini) uses roughly the same electricity as a Google search (~0.3-0.4 Wh), but reasoning models like o3 or DeepSeek-R1 can consume 10-70x more per prompt.

-

The efficiency gap between AI models spans over 200x, choosing the right model is itself a sustainability decision.

-

TikTok or Netflix sessions still carry heavier carbon footprints per minute than most AI prompts, but scaling matters: billions of queries per day reshape the baseline.

-

The big four hyperscalers (Microsoft, AWS, Google, Meta) lead in sustainability metrics, but diverge in transparency, water usage, and carbon offset practices.

-

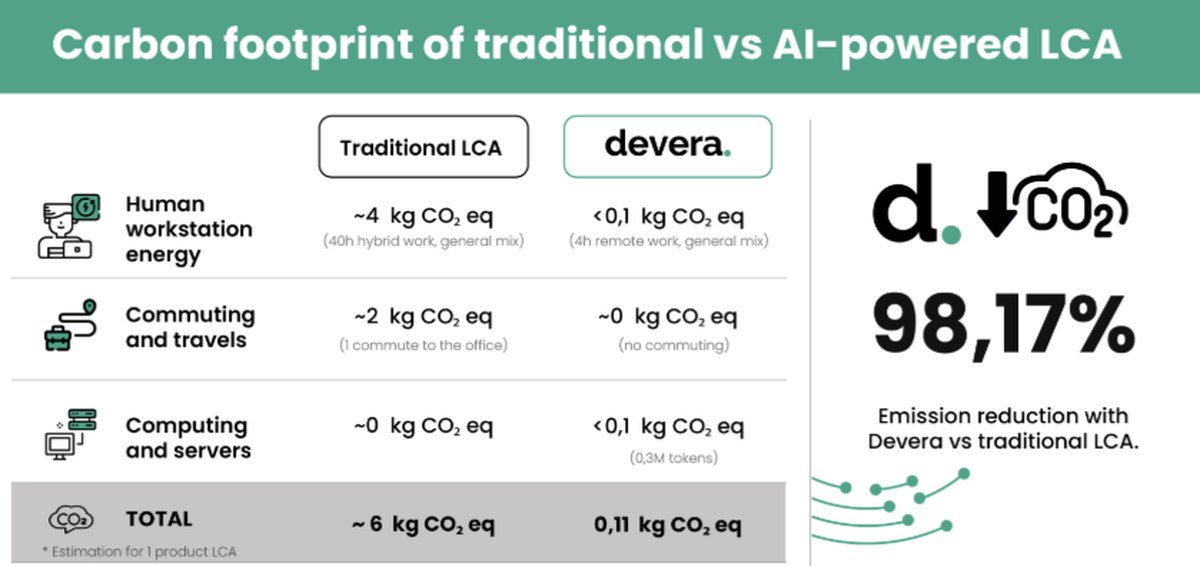

Well-designed AI processes can drastically reduce environmental impact compared to traditional methods, as shown by Devera’s 50x lower carbon footprint per LCA.

-

AI workloads make water the new frontier: several providers now aim to be water-positive by 2030, with zero-water cooling emerging as a key design principle.

AI systems are fast becoming one of the biggest new energy and water consumers in digital infrastructure. While the impact of video streaming and social media has been studied for over a decade, the recent surge in large language models (LLMs) and generative AI introduces a new class of persistent, high-intensity workloads. In this article, we break down the measurable footprint of AI from model training to inference and compare it to other everyday digital activities.

We’ll also take a close look at the data centre strategies behind the world’s largest AI platforms, examining how Microsoft, AWS, Google and Meta are addressing the growing tension between performance, sustainability and transparency.

How much energy does AI actually use?

Global data centre electricity use is expected to reach 945 TWh by 2030, up from 415 TWh in 2024. A large share of this growth comes from the rising demand for AI inference, especially from generative models. Estimates suggest that AI alone may account for 652 TWh (69%) by 2030, roughly a 28-fold jump from the 23 TWh estimated for 2022.

Table 1: Estimated electricity use by AI (global)

| Year | Estimated AI electricity use | Source |

|---|---|---|

| 2022 | 23 TWh | IDC |

| 2030 | 652 TWh (projected) | Utility forecast |
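The share and growth figures in this section follow from simple ratios. The sketch below reproduces them using only the numbers quoted above; the variable and function names are illustrative, and the AI baseline is the 2022 IDC estimate from Table 1 rather than a 2024 value.

```python
# Figures quoted in this section, in TWh per year.
TOTAL_DC_2024 = 415.0   # all data centres, 2024
TOTAL_DC_2030 = 945.0   # all data centres, 2030 (projected)
AI_2022 = 23.0          # AI only, 2022 (IDC, Table 1)
AI_2030 = 652.0         # AI only, 2030 (projected, Table 1)


def share(part: float, whole: float) -> float:
    """Return part as a fraction of whole."""
    return part / whole


def growth_multiple(start: float, end: float) -> float:
    """Return how many times larger end is than start."""
    return end / start


ai_share_2030 = share(AI_2030, TOTAL_DC_2030)
dc_growth = growth_multiple(TOTAL_DC_2024, TOTAL_DC_2030)
ai_growth = growth_multiple(AI_2022, AI_2030)

print(f"AI share of data centre electricity in 2030: {ai_share_2030:.0%}")
print(f"Data centre growth, 2024 to 2030: {dc_growth:.1f}x")
print(f"AI growth, 2022 to 2030: {ai_growth:.1f}x")
```

Because the two AI estimates come from different sources, the growth multiple is best read as an order of magnitude, not a precise forecast.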

This growth is being fuelled by the rollout of energy-intensive GPUs. A single Nvidia H100 draws up to 700 watts, and an eight-GPU node can reach 5.6 kW. Full racks of AI compute can exceed 240 kW, forcing a redesign of cooling infrastructure and power provisioning in modern data halls.
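The node figure above is a straight multiplication, and the same arithmetic gives a feel for rack density. This is a rough sketch that counts GPU power only; ignoring CPUs, memory, networking and fans is an assumption made for simplicity, so real racks hold fewer nodes than the ratio suggests.

```python
H100_MAX_WATTS = 700    # per-GPU peak draw quoted above
GPUS_PER_NODE = 8
RACK_KW = 240.0         # upper-end rack figure quoted above

# Peak GPU power of one eight-GPU node, in kW.
node_kw = H100_MAX_WATTS * GPUS_PER_NODE / 1000

# GPU-only upper bound on nodes per rack (assumption: no other loads).
gpu_only_nodes_per_rack = RACK_KW / node_kw

# Energy drawn if one node ran at peak for a full day, in kWh.
node_kwh_per_day = node_kw * 24

print(f"Node peak: {node_kw:.1f} kW")
print(f"GPU-only nodes per 240 kW rack: {gpu_only_nodes_per_rack:.0f}")
print(f"One node at peak for 24 h: {node_kwh_per_day:.1f} kWh")
```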

How much energy does an AI query use, and what is its carbon footprint?

A comprehensive academic benchmark (arXiv:2505.09598) tested 30 models across short, medium and long queries, revealing dramatic differences. The gap between the most and least efficient models spans over 200x: LLaMA-3.2-1B consumes just 0.07 Wh, while DeepSeek-R1 reaches 23.8 Wh for an equivalent short prompt. Google’s Gemini 2.5 remains impressively efficient at 0.24 Wh, with Google reporting a 33x efficiency improvement over the past 12 months. Reasoning models (o3, o1, DeepSeek-R1) consume dramatically more than standard models due to extended chain-of-thought processing, and the cost scales steeply with longer queries.

These differences don’t just affect cloud costs; they reshape the environmental equation, especially when scaled across billions of requests. The carbon impact per prompt is no longer a fixed constant, but a design choice tied to model architecture, chip efficiency, and infrastructure.

Running the model: energy per user request

| Model | Short query* (Wh) | Medium query** (Wh) | Long query*** (Wh) |

|---|---|---|---|

| LLaMA-3.2-1B (Meta) | 0.07 | 0.22 | 0.34 |

| LLaMA-3-8B (Meta) | 0.09 | 0.29 | n/a |

| GPT-4.1 nano (OpenAI) | 0.10 | 0.27 | 0.45 |

| LLaMA-3.2-3B (Meta) | 0.12 | 0.38 | 0.57 |

| Gemini 2.5 (Google) | 0.24 | n/a | n/a |

| LLaMA-3.3-70B (Meta) | 0.25 | 0.86 | 1.65 |

| GPT-4o (OpenAI) | 0.42 | 1.21 | 1.79 |

| GPT-4o mini (OpenAI) | 0.42 | 1.42 | 2.11 |

| LLaMA-3-70B (Meta) | 0.64 | 2.11 | n/a |

| o1-mini (OpenAI) | 0.63 | 1.60 | 3.61 |

| Claude 3.7 Sonnet (Anthropic) | 0.84 | 2.78 | 5.52 |

| o3-mini (OpenAI) | 0.85 | 2.45 | 2.92 |

| GPT-4.1 (OpenAI) | 0.92 | 2.51 | 4.23 |

| LLaMA-3.1-70B (Meta) | 1.10 | 3.56 | 11.63 |

| GPT-4 Turbo (OpenAI) | 1.66 | 6.76 | 9.73 |

| GPT-4 (OpenAI) | 1.98 | 6.51 | n/a |

| LLaMA-3.1-405B (Meta) | 1.99 | 6.91 | 20.76 |

| o3-mini high (OpenAI) | 2.32 | 5.13 | 4.60 |

| o4-mini high (OpenAI) | 2.92 | 5.04 | 5.67 |

| Claude 3.7 Sonnet ET (Anthropic) | 3.49 | 5.68 | 17.05 |

| DeepSeek-V3 (DeepSeek) | 3.51 | 9.13 | 13.84 |

| o1 (OpenAI) | 4.45 | 12.10 | 17.49 |

| GPT-4.5 (OpenAI) | 6.72 | 20.50 | 30.50 |

| o3 (OpenAI) | 7.03 | 21.41 | 39.22 |

| DeepSeek-R1 (DeepSeek) | 23.82 | 29.00 | 33.63 |

* Short: ~100 input → 300 output tokens. ** Medium: ~1k → 1k tokens. *** Long: ~10k → 1.5k tokens. Cells without a value were not tested due to context limits. Source: arXiv:2505.09598 (Jegham et al., 2025). Gemini data from Google Cloud Blog.
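To make the spread in the table concrete, the following sketch expresses a handful of short-query figures relative to a chosen baseline. The numbers are copied from the table; the `relative_cost` helper is a hypothetical name used only for this illustration.

```python
# Short-query energy (Wh) for a subset of the benchmark table above.
SHORT_QUERY_WH = {
    "LLaMA-3.2-1B": 0.07,
    "GPT-4.1 nano": 0.10,
    "Gemini 2.5": 0.24,
    "GPT-4o": 0.42,
    "Claude 3.7 Sonnet": 0.84,
    "o3": 7.03,
    "DeepSeek-R1": 23.82,
}


def relative_cost(table: dict[str, float], baseline: str) -> dict[str, float]:
    """Express every model's energy as a multiple of the baseline model."""
    base = table[baseline]
    return {model: wh / base for model, wh in table.items()}


vs_lightest = relative_cost(SHORT_QUERY_WH, "LLaMA-3.2-1B")
vs_gpt4o = relative_cost(SHORT_QUERY_WH, "GPT-4o")

for model in SHORT_QUERY_WH:
    print(f"{model:18s} {vs_lightest[model]:7.1f}x lightest, "
          f"{vs_gpt4o[model]:6.1f}x GPT-4o")
```

Measured against GPT-4o, the reasoning models land in the tens of multiples, which is where the 10-70x range quoted in this article comes from.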

Carbon footprint: it depends on where the model runs

CO2 per query depends not only on energy but on the carbon intensity of the provider’s grid. The paper uses provider-specific emission factors rather than global averages, reflecting the actual infrastructure behind each API:

| Provider | Host | Carbon intensity (g CO2/kWh) | PUE |

|---|---|---|---|

| Google | Google Cloud | ~50-100 (renewable PPAs) | 1.10 |

| OpenAI | Azure (US) | 353 | 1.12 |

| Anthropic | AWS (US) | 385 | 1.14 |

| Meta (Llama) | AWS (US) | 385 | 1.14 |

| DeepSeek | Own infra (China) | 600 | 1.27 |

This means a short DeepSeek-R1 query produces roughly 14.3 g CO2 (23.8 Wh × 600 g CO2/kWh ÷ 1,000), while the same query on a cleaner grid would produce far less. For comparison, the table below also shows each model’s emissions at the global-average grid intensity of 430 g CO2/kWh (IEA 2025, down from 445 in 2024):

| Model | Energy (Wh) | CO2 provider grid (g) | CO2 global avg (g) |

|---|---|---|---|

| GPT-4.1 nano | 0.10 | 0.04 | 0.04 |

| Gemini 2.5 | 0.24 | 0.03* | 0.10 |

| GPT-4o | 0.42 | 0.15 | 0.18 |

| Claude 3.7 Sonnet | 0.84 | 0.32 | 0.36 |

| GPT-4.1 | 0.92 | 0.32 | 0.40 |

| o3 | 7.03 | 2.48 | 3.02 |

| DeepSeek-R1 | 23.82 | 14.29 | 10.24 |

* Google’s self-reported figure based on their renewable energy procurement; significantly lower than global-average estimate.
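The CO2 columns can be reproduced by multiplying per-query energy by grid carbon intensity. Here is a minimal sketch using the intensities from the provider table; it applies no additional PUE factor, which is an assumption (the table values are reproduced without one), and the function name is illustrative.

```python
# Grid carbon intensity in g CO2/kWh, from the provider table above.
GRID_G_PER_KWH = {
    "Azure (US)": 353,
    "AWS (US)": 385,
    "DeepSeek (China)": 600,
}
GLOBAL_AVG_G_PER_KWH = 430


def co2_grams(energy_wh: float, intensity_g_per_kwh: float) -> float:
    """Convert per-query energy in Wh to grams of CO2."""
    return energy_wh / 1000 * intensity_g_per_kwh


# (model, short-query Wh, hosting grid)
examples = [
    ("GPT-4o", 0.42, "Azure (US)"),
    ("Claude 3.7 Sonnet", 0.84, "AWS (US)"),
    ("o3", 7.03, "Azure (US)"),
    ("DeepSeek-R1", 23.82, "DeepSeek (China)"),
]

for model, wh, grid in examples:
    provider = co2_grams(wh, GRID_G_PER_KWH[grid])
    global_avg = co2_grams(wh, GLOBAL_AVG_G_PER_KWH)
    print(f"{model:18s} provider grid {provider:6.2f} g, "
          f"global average {global_avg:6.2f} g")
```

The Gemini row is left out because its provider-grid value is self-reported by Google, not derived from a single intensity figure.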

Eco-efficiency ranking: performance vs environmental cost

Not all heavy models deliver proportionally better results. The paper applies cross-efficiency Data Envelopment Analysis (DEA) to rank models by how much performance they deliver per unit of environmental cost:

| Rank | Model | Eco-efficiency score |

|---|---|---|

| 1 | Claude 3.7 Sonnet | 0.886 |

| 2 | o4-mini (high) | 0.867 |

| 3 | o3-mini | 0.840 |

| 4 | GPT-4.1 mini | 0.802 |

| 5 | GPT-4o | 0.762 |

| … | LLaMA models | ~0.5 |

| 27 | DeepSeek-V3 | 0.060 |

| 28 | DeepSeek-R1 | 0.058 |

Claude 3.7 Sonnet tops the eco-efficiency ranking, delivering strong benchmark performance while consuming only 0.84 Wh per short query. Reasoning models like o4-mini and o3-mini also rank well because they balance extended thinking with smaller model sizes. At the bottom, DeepSeek models score lowest due to the combination of high energy use and carbon-intensive Chinese grid infrastructure.

How do AI queries compare to other digital habits?

For standard inference, OpenAI disclosed that a typical ChatGPT query consumes ~0.34 Wh, while academic benchmarks place GPT-4o closer to 0.42 Wh for a short exchange. This puts AI prompts close to Google searches (~0.3 Wh) and well below short-form video or streaming. Reasoning models (o3, DeepSeek-R1) are a different story entirely: they can consume 10-70x more than a standard query.

Electricity and CO2 footprint of common digital actions (10 units)

| Activity | Energy (Wh) | CO2 (g) [global avg] |

|---|---|---|

| Google Search x10 queries | 3.0 | 1.3 |

| ChatGPT x10 queries (standard)† | 3.4–4.2 | 1.5–1.8 |

| Netflix x10 min | 12.8 | 5.7 |

| TikTok x10 min | 66 | 29.2 |

| ChatGPT o3 x10 queries (reasoning) | 70.3 | 30.2 |

† Range reflects OpenAI’s disclosed 0.34 Wh average vs academic benchmark of 0.42 Wh for GPT-4o.

While a single AI query may consume relatively little energy, the story changes at scale: billions of daily requests quickly add up to significant power demands. That’s why it’s crucial to benchmark AI workloads against familiar digital habits. Comparing them to activities like Google searches, video streaming, or social media use helps contextualize their environmental impact and makes the discussion more tangible for users, policymakers and infrastructure planners alike.
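To see how small per-query figures add up, the sketch below multiplies them by a daily request volume. The one-billion-per-day volume is a hypothetical round number chosen only to illustrate "billions of daily requests"; it is not a reported traffic figure, and the function names are illustrative.

```python
# Per-query energy in Wh, taken from figures quoted in this article.
WH_PER_QUERY = {
    "ChatGPT (OpenAI disclosed)": 0.34,
    "GPT-4o (benchmark, short)": 0.42,
    "o3 (benchmark, short)": 7.03,
}

# Hypothetical traffic level, for illustration only.
HYPOTHETICAL_QUERIES_PER_DAY = 1_000_000_000


def daily_energy_mwh(wh_per_query: float, queries_per_day: int) -> float:
    """Total daily energy in MWh for a per-query cost and a daily volume."""
    return wh_per_query * queries_per_day / 1_000_000


def yearly_energy_gwh(wh_per_query: float, queries_per_day: int) -> float:
    """Total yearly energy in GWh, assuming the same volume every day."""
    return daily_energy_mwh(wh_per_query, queries_per_day) * 365 / 1000


for label, wh in WH_PER_QUERY.items():
    mwh = daily_energy_mwh(wh, HYPOTHETICAL_QUERIES_PER_DAY)
    gwh = yearly_energy_gwh(wh, HYPOTHETICAL_QUERIES_PER_DAY)
    print(f"{label}: {mwh:,.0f} MWh/day, {gwh:,.1f} GWh/year")
```

Under this assumed volume, routing every request to a reasoning model instead of a standard one changes the daily total by more than an order of magnitude.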

What about training? The big hit before deployment

Training a large foundation model remains the most resource-intensive single step in the AI lifecycle. Inference dominates total energy use at scale (billions of daily queries), but a single training run can consume more electricity than a small town uses in a year.

Training impact of selected LLMs

| Model | Training energy | Estimated CO2 | Cooling water |

|---|---|---|---|

| GPT-3 (175B params, 2020) | 1.287 GWh | ~552 t CO2e | 5.4 million L |

| LLaMA-3-70B (70B params, 2024) | ~6.4 GWh | ~2,600 t CO2e | Meta-disclosed |

| GPT-4 (est. 1.7T params, 2023) | ~52-62 GWh | ~12-15 kt CO2e | Unknown |

| GPT-5 (est., 2025) | ~80-100 GWh (est.) | ~20-30 kt CO2e | Unknown |

Training happens less frequently than inference, but its energy and water use per run can rival or exceed some small countries’ weekly usage. The trend is clear: each generation consumes significantly more, though efficiency gains in hardware (H100 vs A100) partially offset the growth.
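Two quick checks help put the training table in context: the grid intensity implied by each energy and CO2 pair, and how many standard queries would use the same energy as one run. The sketch below uses only the rows with point estimates; comparing a 2020 training run with OpenAI’s disclosed 0.34 Wh per query is a loose illustration of scale, not a like-for-like comparison.

```python
# (training energy in GWh, estimated emissions in t CO2e) from the table above.
TRAINING_RUNS = {
    "GPT-3": (1.287, 552.0),
    "LLaMA-3-70B": (6.4, 2600.0),
}

WH_PER_STANDARD_QUERY = 0.34  # OpenAI's disclosed ChatGPT average


def implied_intensity_g_per_kwh(energy_gwh: float, co2_tonnes: float) -> float:
    """Implied grid intensity. 1 t = 1e6 g and 1 GWh = 1e6 kWh, so t/GWh equals g/kWh."""
    return co2_tonnes / energy_gwh


def query_equivalents(energy_gwh: float, wh_per_query: float) -> float:
    """Number of queries that would consume the same energy as the run."""
    return energy_gwh * 1e9 / wh_per_query


for model, (gwh, tonnes) in TRAINING_RUNS.items():
    intensity = implied_intensity_g_per_kwh(gwh, tonnes)
    queries = query_equivalents(gwh, WH_PER_STANDARD_QUERY)
    print(f"{model}: ~{intensity:.0f} g CO2/kWh implied, "
          f"~{queries / 1e9:.1f} billion standard queries")
```

Both implied intensities fall close to the global-average grid figure used elsewhere in this article, which suggests the table’s CO2 estimates assume a roughly average grid.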

Water: the hidden cost of generative AI

Inference workloads also require significant water, primarily for cooling. Estimates show that each ChatGPT query uses 0.32 mL of water, a drop individually, but enormous at scale. Microsoft’s West Des Moines campus, for example, consumes ~43 million L/month in summer, representing 6% of the city’s total draw.

Researchers project that AI-driven data centres could withdraw up to 6.6 billion m3 of freshwater by 2027, more than four times Denmark’s annual usage.

Global outlook

AI could withdraw 4.2-6.6 billion m3 of freshwater in 2027, evaporating 0.38-0.60 billion m3 outright. That is equivalent to the annual freshwater withdrawal of four to six Denmarks.

Case study: Microsoft in West Des Moines

The company’s Iowa campus peaks at 11.5 million gallons/month (~43 million L) during summer, about 6% of the city’s total usage.
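The water figures in this section mix gallons, litres and millilitres, so a short conversion sketch helps. It checks the gallon-to-litre conversion, derives the city-wide draw implied by the 6% share, and scales the per-query estimate to a hypothetical one billion queries per day (an illustrative volume, not a reported one).

```python
LITRES_PER_US_GALLON = 3.785411784  # exact definition of the US liquid gallon


def gallons_to_litres(gallons: float) -> float:
    """Convert US liquid gallons to litres."""
    return gallons * LITRES_PER_US_GALLON


# West Des Moines campus: peak summer month quoted above.
peak_litres = gallons_to_litres(11.5e6)

# If that is about 6% of the city's usage, the implied city total is:
implied_city_litres = peak_litres / 0.06

# Per-query water estimate quoted above, scaled to a hypothetical volume.
ML_PER_QUERY = 0.32
HYPOTHETICAL_QUERIES_PER_DAY = 1_000_000_000
daily_query_litres = ML_PER_QUERY * HYPOTHETICAL_QUERIES_PER_DAY / 1000

print(f"Campus peak: {peak_litres / 1e6:.1f} million L/month")
print(f"Implied city total: {implied_city_litres / 1e6:.0f} million L/month")
print(f"1 billion queries: {daily_query_litres:,.0f} L/day")
```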

Corporate response

To tackle this, Microsoft now implements a closed-loop cooling system that eliminates evaporative losses, saving >125 million L per datacentre per year.

Data centers: the cloud wars go green

The four major hyperscalers (Microsoft Azure, Amazon Web Services (AWS), Google Cloud, and Meta) present the most comprehensive and measurable sustainability strategies in the market. Other providers (Oracle Cloud, IBM Cloud, etc.) share similar goals but currently publish fewer operational metrics or have set less ambitious climate targets.

Let’s compare the main hyperscalers by sustainability metric.

Sustainability snapshot of major AI/cloud providers (2025)

| Provider | Carbon Goal | Renewable Energy | PUE | Water Goal | WUE |

|---|---|---|---|---|---|

| Meta | Net-zero 2030 | 100% since 2020 | 1.08 | Replenish 200% in high-stress areas | 0.20 L/kWh |

| Google | Net-zero 2030 | 100% annually, 64% CFE 24/7 | 1.10 | Replenish 120% of water | ~1 L/kWh |

| Microsoft | Carbon-negative 2030 | 100% target reached 2025 | 1.12 | Water-positive 2030 | ND (no water in new designs) |

| AWS | Net-zero 2040 | 100% since 2023 | 1.15 | Water-positive 2030 (53% progress) | 0.15 L/kWh |
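PUE is the ratio of total facility energy to IT equipment energy, and WUE is litres of water per kWh of IT energy, so both can be turned into overhead per unit of compute. The sketch below does this for the snapshot values; the 1,000 kWh IT load is an arbitrary illustration, and Google’s WUE is entered as 1.0 because the table only gives "~1 L/kWh".

```python
from typing import Optional

# PUE and WUE (L/kWh) from the snapshot table above; None means not disclosed.
PROVIDERS = {
    "Meta": {"pue": 1.08, "wue": 0.20},
    "Google": {"pue": 1.10, "wue": 1.0},      # table gives "~1 L/kWh"
    "Microsoft": {"pue": 1.12, "wue": None},  # listed as ND
    "AWS": {"pue": 1.15, "wue": 0.15},
}


def facility_kwh(it_kwh: float, pue: float) -> float:
    """Total facility energy needed to deliver it_kwh to IT equipment."""
    return it_kwh * pue


def water_litres(it_kwh: float, wue: Optional[float]) -> Optional[float]:
    """Water used for it_kwh of IT energy, or None when WUE is undisclosed."""
    return None if wue is None else it_kwh * wue


IT_LOAD_KWH = 1000.0  # arbitrary illustrative workload

for name, metrics in PROVIDERS.items():
    total = facility_kwh(IT_LOAD_KWH, metrics["pue"])
    water = water_litres(IT_LOAD_KWH, metrics["wue"])
    water_text = "not disclosed" if water is None else f"{water:.0f} L"
    print(f"{name:10s} {total:.0f} kWh total "
          f"({total - IT_LOAD_KWH:.0f} kWh overhead), water: {water_text}")
```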

Microsoft Azure

Climate roadmap: Carbon negative, water positive, and zero waste by 2030; 100% renewable electricity target reached in 2025.

Operational efficiency: Average PUE of 1.12 in next-gen data centers, outperforming the industry average of 1.4-1.6.

Innovations:

- Waterless cooling and liquid immersion systems that cut GHG emissions by 15-21% and reduce water usage by 31-52%.

- Hydrogen fuel cells for 3 MW zero-emission backup power.

Challenges: Despite water-saving designs, rising water consumption in Texas has led to local opposition.

Amazon Web Services (AWS)

Energy: Reached 100% renewable electricity matching in 2023; net-zero target for 2040.

Efficiency: Global PUE of 1.15 (best site at 1.04).

Water: Water Positive by 2030 initiative; global WUE of 0.15 L/kWh (down 40% since 2021).

Innovations:

- Use of recycled water and community water recharge projects; reached 53% of 2030 water goal by 2024.

- Investment in modular nuclear reactors to decarbonize electricity in low-renewable regions.

Challenges: Heavy reliance on Renewable Energy Certificates (RECs) and surging AI workloads complicate real emission reductions.

Google Cloud

“24/7 Carbon-Free Energy (CFE)” strategy: Operate every data center with hourly carbon-free energy by 2030; reached 64% globally in 2024 (10 regions at >=90%).

Efficiency: Average PUE of 1.10; publishes detailed campus-level data.

Water: Commitment to replenish 120% of freshwater consumed and improve local watersheds.

Innovations & challenges:

- Secured geothermal, battery, and nuclear contracts to support 24/7 CFE goals.

- AI-driven growth raised water footprint 17% in 2023, sparking transparency concerns.

Meta (Facebook)

Energy: 100% renewable since 2020; net-zero across full value chain by 2030.

Efficiency: PUE of 1.08 and WUE of 0.20 L/kWh; all facilities LEED Gold certified.

Water: Return 200% of water in high-stress regions, 100% in medium-stress regions.

Innovations: 150 MW geothermal agreement in New Mexico; exploring micro-nuclear for AI workloads.

Challenges: Discrepancy between location-based and market-based emissions raises questions about RECs’ actual impact.

Other Providers

Key Sustainability Highlights

| Provider | Sustainability Details |

|---|---|

| Oracle Cloud Infrastructure (OCI) | 100% renewables by 2025; net-zero by 2050; PUE of 1.30 in most efficient region. Uses rain capture and xeriscaping to reduce water needs. |

| IBM Cloud | 75% renewables by 2025 (90% by 2030), net-zero by 2030. Promotes on-site solar and water management through its Sustainability Accelerator. |

| Emerging Hyperscale/Colocation | The EU’s Climate Neutral Data Centre Pact targets PUE <=1.3 and water use < 2.5 L/kWh by 2025. Providers like Switch and CyrusOne already deploy zero-water cooling systems. |

Key sustainability highlights of Data Centers:

- Microsoft and Google lead with the most aggressive climate roadmaps (carbon negative / 24/7 CFE) and innovations in waterless cooling and carbon-free backup power.

- AWS excels in rapid renewable deployment and reports its water-positive target as 53% met by 2024, though its net-zero goal is set a decade later (2040).

- Meta offers the best operational PUE-WUE ratios and runs entirely on renewables, but its explosive AI expansion strains sustainability performance.

- Oracle, IBM, and others are progressing but show less transparency and have longer-term goals.

Environmental impact of AI-powered LCA with Devera

Each product footprint calculated with Devera avoids hours of manual consulting, unnecessary meetings, and carbon-heavy travel. But it also triggers:

- Between 30,000 and 400,000 tokens processed for each LCA.

- Several seconds of high-intensity computation.

- And a short but meaningful spike in energy usage inside a data center powered by AWS (where Anthropic’s Claude models run).

Using the data from our benchmarks above, a typical Devera analysis using Claude Sonnet consumes approximately 0.8-5.5 Wh depending on query complexity, equivalent to running a 1,000 W microwave for roughly 3-20 seconds.
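The microwave comparison is a unit conversion from watt-hours to seconds at a given power. The sketch below assumes a 1,000 W appliance, which is an assumption and not a figure from the benchmark; the result scales inversely with the wattage chosen.

```python
MICROWAVE_WATTS = 1000.0  # assumed appliance power; real models vary


def seconds_at_power(energy_wh: float, watts: float) -> float:
    """Seconds an appliance of the given power runs on energy_wh (1 Wh = 3600 J)."""
    return energy_wh * 3600 / watts


# Energy range quoted above for a Devera analysis.
low_wh, high_wh = 0.8, 5.5

low_s = seconds_at_power(low_wh, MICROWAVE_WATTS)
high_s = seconds_at_power(high_wh, MICROWAVE_WATTS)

print(f"{low_wh} Wh is about {low_s:.1f} s at {MICROWAVE_WATTS:.0f} W")
print(f"{high_wh} Wh is about {high_s:.1f} s at {MICROWAVE_WATTS:.0f} W")
```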

To be clear, Devera’s footprint is small compared to massive AI labs, but the direction matters. If we’re not careful, we could end up solving environmental problems while contributing silently to another one.

Based on our assumptions, an LCA from a traditional consultant requires more than 50 times the energy of an AI-based LCA with Devera, factoring in human workstation energy, commuting, office infrastructure, and the multi-week timeline of manual analysis.

Conclusion: AI is reshaping the sustainability equation

The environmental footprint of AI is complex, dynamic and still evolving. Per-query efficiency is improving fast (Google reports a 33x improvement in just 12 months), but the sheer scale of demand is growing even faster. The 200x efficiency gap between the lightest and heaviest models means that model selection is itself a sustainability decision, one that developers, companies and users make billions of times per day.

Reasoning models like o3 and DeepSeek-R1 have introduced a new tier of energy consumption that rivals video streaming on a per-interaction basis. And infrastructure matters as much as architecture: the same energy drawn from the Chinese grid used by DeepSeek (600 g CO2/kWh) produces roughly 55-70% more CO2 than on US hyperscaler grids (353-385 g CO2/kWh).

Leaders like Microsoft and Google are pioneering zero-water cooling and hourly carbon tracking, but the road to net-zero AI will require deeper transparency, stricter regulation, and better workload design.

If AI is going to be the new electricity, then it must also follow the same rules: measured, monitored and decarbonised at source.